With Ubuntu 19.10 Desktop there is finally an (experimental) ZFS setup option, alongside the option to install ZFS manually. However, getting Ubuntu Server installed on ZFS still takes a lot of manual steps. The steps here follow my desktop guide closely and assume you want a UEFI setup.

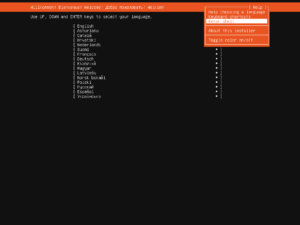

Entering a root prompt from within the Ubuntu Server installer is not hard if you know where to look. Just find Enter Shell behind the Help menu item (Shift+Tab comes in handy).

The very first step should be setting up a few variables: disk, pool, host name, and user name. This way we can use them going forward and avoid accidental mistakes. Just make sure to replace these values with ones appropriate for your system.

DISK=/dev/disk/by-id/ata_disk

POOL=ubuntu

HOST=server

USER=user
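
Since every later command depends on these variables, a quick sanity check can catch a typo before anything destructive runs. This is only a minimal sketch; the function name `check_install_vars` is a placeholder of my own and not part of any tool.

```shell
# Hypothetical helper: fail early if any of the four variables is empty
# or if DISK does not point at a block device.
check_install_vars() {
    for name in DISK POOL HOST USER; do
        # Look up the variable whose name is stored in $name.
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "Variable $name is not set" >&2
            return 1
        fi
    done
    if [ ! -b "$DISK" ]; then
        echo "$DISK is not a block device" >&2
        return 1
    fi
    echo "Variables look fine"
}
```

Running `check_install_vars` right before the partitioning step should be enough; it only reads the variables and changes nothing.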

To start the fun we need the debootstrap and zfsutils-linux packages. Unlike with the desktop installation, the ZFS package is not installed by default.

apt install --yes debootstrap zfsutils-linux

The general idea of my disk setup is to maximize the amount of space available for the pool with the minimum of supporting partitions. If you are planning to have multiple kernels, increasing the boot partition size might be a good idea. The major change compared to my previous guide is partition numbering. While having a partition layout different from the partition order had its advantages, a lot of partition editing tools would simply "correct" the partition order to match the layout and thus cause issues down the road.

sgdisk --zap-all $DISK

sgdisk -n1:1M:+511M -t1:8300 -c1:Boot $DISK

sgdisk -n2:0:+128M -t2:EF00 -c2:EFI $DISK

sgdisk -n3:0:0 -t3:8309 -c3:Ubuntu $DISK

sgdisk --print $DISK
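
To double-check the output of the print command, it helps to know where the third partition should begin. The intended layout is 1 MiB of alignment, 511 MiB for boot, and 128 MiB for EFI. The sketch below just does that arithmetic; the variable names are my own, and the 512-byte sector size is an assumption that will not hold for every disk.

```shell
# Sizes in MiB, taken from the sgdisk calls above.
ALIGN_MIB=1
BOOT_MIB=511
EFI_MIB=128

# Offset at which the third (LUKS) partition is expected to start.
LUKS_START_MIB=$((ALIGN_MIB + BOOT_MIB + EFI_MIB))

# Same offset in 512-byte sectors (an assumption; 4Kn drives
# report 4096-byte sectors instead).
LUKS_START_SECTOR=$((LUKS_START_MIB * 1024 * 1024 / 512))

echo "LUKS partition starts at ${LUKS_START_MIB} MiB (sector ${LUKS_START_SECTOR})"
```

If the start sector of partition 3 in the sgdisk listing differs, something went wrong with one of the earlier partitions.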

Unless there is a major reason otherwise, I like to use disk encryption.

cryptsetup luksFormat -q --cipher aes-xts-plain64 --key-size 512 \

--pbkdf pbkdf2 --hash sha256 $DISK-part3

Of course, you should then also open the device. I like to use the disk name as the name of the mapped device, but really anything goes.

LUKSNAME=`basename $DISK`

cryptsetup luksOpen $DISK-part3 $LUKSNAME

Finally we're ready to create the system ZFS pool.

zpool create -o ashift=12 -O compression=lz4 -O normalization=formD \

-O acltype=posixacl -O xattr=sa -O dnodesize=auto -O atime=off \

-O canmount=off -O mountpoint=none -R /mnt/install $POOL /dev/mapper/$LUKSNAME

zfs create -o canmount=noauto -o mountpoint=/ $POOL/root

zfs mount $POOL/root

Assuming UEFI boot, two additional partitions are needed: one for EFI and one for booting. Unlike what you get with the official guide, here I don't have a ZFS pool for the boot partition but plain old ext4. I find that potential fixups work better that way and boot compatibility is better. If you are thinking about mirroring, making it bigger and using ZFS might be a good idea. For a single disk, ext4 will do.

yes | mkfs.ext4 $DISK-part1

mkdir /mnt/install/boot

mount $DISK-part1 /mnt/install/boot/

mkfs.msdos -F 32 -n EFI $DISK-part2

mkdir /mnt/install/boot/efi

mount $DISK-part2 /mnt/install/boot/efi

Bootstrapping Ubuntu on the newly created pool is next. As we're dealing with a server, you can consider using --variant=minbase rather than the full Debian system. I personally don't see much value in that as other packages get installed as dependencies anyhow. In any case, this will take a while.

debootstrap eoan /mnt/install/

zfs set devices=off $POOL

Our newly copied system is lacking a few files and we should make sure they exist before proceeding.

echo $HOST > /mnt/install/etc/hostname

sed "s/ubuntu-server/$HOST/" /etc/hosts > /mnt/install/etc/hosts

sed '/cdrom/d' /etc/apt/sources.list > /mnt/install/etc/apt/sources.list

cp /etc/netplan/*.yaml /mnt/install/etc/netplan/
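
If you are curious what the two sed filters actually do, you can try them on sample text first. The input lines below are made up for illustration and only stand in for the installer's real files.

```shell
# Sample input standing in for the installer's /etc/apt/sources.list;
# both entries are made up for illustration.
SAMPLE_SOURCES='deb cdrom:[Ubuntu-Server 19.10]/ eoan main restricted
deb http://archive.ubuntu.com/ubuntu eoan main restricted'

# Same filter as above: delete every line mentioning "cdrom".
FILTERED=$(printf '%s\n' "$SAMPLE_SOURCES" | sed '/cdrom/d')
echo "$FILTERED"

# Same substitution as above, applied to a typical hosts entry.
HOST=server
RENAMED=$(echo "127.0.1.1 ubuntu-server" | sed "s/ubuntu-server/$HOST/")
echo "$RENAMED"
```

The first filter leaves only the network repository, and the second swaps the installer's host name for your own.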

Finally we're ready to "chroot" into our new system.

mount --rbind /dev /mnt/install/dev

mount --rbind /proc /mnt/install/proc

mount --rbind /sys /mnt/install/sys

chroot /mnt/install \

/usr/bin/env DISK=$DISK POOL=$POOL USER=$USER LUKSNAME=$LUKSNAME \

bash --login

Let's not forget to set up the locale and time zone. If you opted for minbase, you can either skip this step or manually install the locales and tzdata packages.

locale-gen --purge "en_US.UTF-8"

update-locale LANG=en_US.UTF-8 LANGUAGE=en_US

dpkg-reconfigure --frontend noninteractive locales

dpkg-reconfigure tzdata

Now we're ready to onboard the latest Linux image.

apt update

apt install --yes --no-install-recommends linux-image-generic linux-headers-generic

Followed by boot environment packages.

apt install --yes zfs-initramfs cryptsetup keyutils grub-efi-amd64-signed shim-signed

If there are multiple encrypted drives or partitions, keyscript really comes in handy to open them all with the same password. As it doesn't have negative consequences, I just add it even for a single disk setup.

echo "$LUKSNAME UUID=$(blkid -s UUID -o value $DISK-part3) none \

luks,discard,initramfs,keyscript=decrypt_keyctl" >> /etc/crypttab

cat /etc/crypttab
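
The resulting crypttab entry has four fields: the mapped name, the source device, the key field (none here), and the options. A small sketch that only formats such a line makes this easier to see; `crypttab_line` is a hypothetical helper and the UUID is made up.

```shell
# Hypothetical helper that only formats the entry; it does not touch
# /etc/crypttab. Arguments: mapped name, UUID of the LUKS partition.
crypttab_line() {
    printf '%s UUID=%s none luks,discard,initramfs,keyscript=decrypt_keyctl\n' "$1" "$2"
}

# Example with a made-up UUID.
crypttab_line ata_disk 01234567-89ab-cdef-0123-456789abcdef
```

The line that cat shows for your own disk should have the same shape, just with the real UUID.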

To mount EFI and boot partitions, we need to do some fstab setup too:

echo "PARTUUID=$(blkid -s PARTUUID -o value $DISK-part1) \

/boot ext4 noatime,nofail,x-systemd.device-timeout=1 0 1" >> /etc/fstab

echo "PARTUUID=$(blkid -s PARTUUID -o value $DISK-part2) \

/boot/efi vfat noatime,nofail,x-systemd.device-timeout=1 0 1" >> /etc/fstab

cat /etc/fstab

Now we get grub started and update our boot environment. Due to Ubuntu 19.10 having some kernel version kerfuffle, we need to manually create the initramfs image. As before, treat cryptsetup discovery errors during mkinitramfs and update-initramfs as OK.

KERNEL=`ls /usr/lib/modules/ | cut -d/ -f1 | sed 's/linux-image-//'`

update-initramfs -u -k $KERNEL
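
The ls pipeline above assumes there is only one kernel directory; with more than one, KERNEL would end up holding several names. If that might be the case on your system, something along these lines could pick just the newest one. This is a sketch of my own, not what the guide was tested with, and it relies on the version sort (`-V`) of GNU sort.

```shell
# Hypothetical helper: print the highest version found in a modules
# directory (defaults to /usr/lib/modules), using version-aware sort.
newest_kernel() {
    ls "${1:-/usr/lib/modules}" | sort -V | tail -n 1
}
```

You would then set `KERNEL=$(newest_kernel)` before calling update-initramfs.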

Grub update is what makes EFI tick.

update-grub

grub-install --target=x86_64-efi --efi-directory=/boot/efi --bootloader-id=Ubuntu \

--recheck --no-floppy

Since we're dealing with a computer that will most probably be used without a screen, it makes sense to install OpenSSH Server.

apt install --yes openssh-server

I also prefer to allow remote root login. Yes, you can create a sudo user and have root unreachable, but that's just swapping one security issue for another. A root user secured with a key is plenty safe.

sed -i '/^#PermitRootLogin/s/^.//' /etc/ssh/sshd_config

mkdir /root/.ssh

echo "<mykey>" >> /root/.ssh/authorized_keys

chmod 644 /root/.ssh/authorized_keys

If you're willing to deal with passwords, you can allow them too by changing both the PasswordAuthentication and PermitRootLogin parameters. I personally don't do this.

sed -i '/^#PasswordAuthentication yes/s/^.//' /etc/ssh/sshd_config

sed -i '/^#PermitRootLogin/s/^.//' /etc/ssh/sshd_config

sed -i 's/^PermitRootLogin prohibit-password/PermitRootLogin yes/' /etc/ssh/sshd_config

passwd
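
To see what these sed expressions do without touching the real configuration, you can feed them a sample first. The two input lines below are what I would expect in a stock sshd_config, but treat that as an assumption and check your own file.

```shell
# Two commented-out defaults, assumed to match a stock sshd_config.
SAMPLE='#PermitRootLogin prohibit-password
#PasswordAuthentication yes'

# Same expressions as above, run on stdin instead of in place (no -i),
# so nothing on disk is modified.
RESULT=$(printf '%s\n' "$SAMPLE" | sed \
    -e '/^#PasswordAuthentication yes/s/^.//' \
    -e '/^#PermitRootLogin/s/^.//' \
    -e 's/^PermitRootLogin prohibit-password/PermitRootLogin yes/')
echo "$RESULT"
```

Both options come out uncommented, with PermitRootLogin switched from prohibit-password to yes.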

A short package upgrade will not hurt.

apt dist-upgrade --yes

We can omit creation of the swap dataset, but I personally find it's good to have it just in case.

zfs create -V 4G -b $(getconf PAGESIZE) -o compression=off -o logbias=throughput \

-o sync=always -o primarycache=metadata -o secondarycache=none $POOL/swap

mkswap -f /dev/zvol/$POOL/swap

echo "/dev/zvol/$POOL/swap none swap defaults 0 0" >> /etc/fstab

echo RESUME=none > /etc/initramfs-tools/conf.d/resume

If one is so inclined, /home directory can get a separate dataset too.

rmdir /home

zfs create -o mountpoint=/home $POOL/home

And now we create the user.

adduser $USER

The only remaining task before restart is to assign extra groups to the user and make sure its home has the correct owner.

usermod -a -G adm,cdrom,dip,plugdev,sudo $USER

chown -R $USER:$USER /home/$USER

Consider enabling a firewall:

apt install --yes man iptables iptables-persistent

While you can go wild with firewall rules, I like to keep them simple to start with. All outgoing traffic is allowed while incoming traffic is limited to new SSH connections and responses to the already established ones.

for IPTABLES_CMD in "iptables" "ip6tables"; do

$IPTABLES_CMD -F

$IPTABLES_CMD -X

$IPTABLES_CMD -Z

$IPTABLES_CMD -P INPUT DROP

$IPTABLES_CMD -P FORWARD DROP

$IPTABLES_CMD -P OUTPUT ACCEPT

$IPTABLES_CMD -A INPUT -i lo -j ACCEPT

$IPTABLES_CMD -A INPUT -m conntrack --ctstate ESTABLISHED,RELATED -j ACCEPT

$IPTABLES_CMD -A INPUT -p tcp --dport 22 -j ACCEPT

done

iptables -A INPUT -p icmp -j ACCEPT

ip6tables -A INPUT -p ipv6-icmp -j ACCEPT

netfilter-persistent save
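
As this machine will likely be reachable only over SSH, it doesn't hurt to confirm that the SSH rule really made it into the saved rules before rebooting. The sketch below assumes the rules were saved to /etc/iptables/rules.v4 and /etc/iptables/rules.v6, which is where I would expect iptables-persistent to put them; `ssh_rule_saved` is a hypothetical helper name.

```shell
# Hypothetical helper: succeed only if the given saved-rules file has
# an ACCEPT rule for TCP port 22 in the INPUT chain.
ssh_rule_saved() {
    grep -q -- '^-A INPUT .*--dport 22 .*-j ACCEPT' "$1"
}
```

Something like `ssh_rule_saved /etc/iptables/rules.v4 && echo OK` (and the same for rules.v6) would then tell you whether you are about to lock yourself out.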

As the install is ready, we can exit our chroot environment.

exit

And clean up our mount points.

umount /mnt/install/boot/efi

umount /mnt/install/boot

mount | grep -v zfs | tac | awk '/\/mnt/ {print $3}' | xargs -i{} umount -lf {}

zpool export -a
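
The long unmount pipeline is easier to follow on sample data: grep drops the ZFS datasets (the pool export takes care of those), tac reverses the order so that later mounts are released first, and awk keeps only the mount points under /mnt. The mount lines below are made up for illustration, and tac is assumed to be available, as it is with GNU coreutils.

```shell
# Made-up excerpt of "mount" output for illustration.
SAMPLE_MOUNTS='udev on /mnt/install/dev type devtmpfs (rw)
proc on /mnt/install/proc type proc (rw)
ubuntu/root on /mnt/install type zfs (rw,noatime,xattr,posixacl)
sysfs on /sys type sysfs (rw)'

# Same filters as above, minus the final xargs/umount step.
TARGETS=$(printf '%s\n' "$SAMPLE_MOUNTS" | grep -v zfs | tac | awk '/\/mnt/ {print $3}')
echo "$TARGETS"
```

Only the two bind mounts under /mnt/install remain, in reverse order, and those are what gets passed to umount.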

After the reboot you should be able to enjoy your installation.

reboot

[2020-06-12: Increased partition size to 511+128 MB (was 384+127 MB before)]

Hi there Josip!

Can you please clarify which installation media are you using for this tutorial and for https://www.medo64.com/2019/12/zfs-ubuntu-server-19-10-without-encryption/?

I’ve tried Ubuntu Server 19.10 Live installer and Alternative installer but I’m not sure what you are using…

Thanks.

I am using ubuntu-19.10-live-server-amd64.iso

This is an excellent guide! Thank you.

Hi,

There is one thing I would add to this perfect guide. On a server, it is not always a good idea to ask for a passphrase for encryption. It is better to read the key from a file / HSM. Do you have a quick solution for this? Where should I put this keyfile when the whole disk is encrypted? I’m trying to figure it out by myself, but I’m still thinking :)

Also, why ZFS native encryption is not an option? Have you ever tried this?

Thanks

Unfortunately, this is a huge issue and I haven’t found a good solution for this. One could use external drive (maybe even one that self-erases if removed, like TmpUsb) but that leaves the problem of key being available on running system. Albeit it might be better than nothing if you really cannot enter the password.