For its XML-based file formats (XML Paper Specification and Office Open XML), Microsoft used a zip file as storage (not a new thing; a lot of manufacturers did that before). If you are using .NET Framework, support already comes built in (I think from version 3.0, but I didn't bother to check; if you have 3.5, you are on the safe side).

Since every package file can consist of multiple parts (think of them as files inside the package), it seemed just great for one project of mine.

ZipPackage

The class that handles all of this is ZipPackage. It uses a somewhat newer version of the zip specification, so support for files larger than 4 GB is not an issue (try doing that with the GZipStream class). Although the underlying format is zip, it is rare to see the .zip extension, since almost every application defines its own. That makes associating files with a program a lot easier.
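To give a feel for the API, here is a minimal sketch of writing one part and reading it back. It assumes a reference to WindowsBase.dll; the class name `PackageSketch`, its method names, and the part name used below are made up for illustration. Note that you normally get a ZipPackage instance through `Package.Open` rather than by constructing it yourself.

```csharp
using System;
using System.IO;
using System.IO.Packaging; // lives in WindowsBase.dll on .NET Framework

// Hypothetical helper class, for illustration only.
static class PackageSketch
{
    // Creates (or overwrites) a package at packagePath with a single text part.
    public static void WriteTextPart(string packagePath, string partName, string text)
    {
        // Part names are relative URIs starting with a slash, e.g. "/data/notes.txt".
        Uri partUri = PackUriHelper.CreatePartUri(new Uri(partName, UriKind.Relative));

        using (Package package = Package.Open(packagePath, FileMode.Create))
        {
            PackagePart part = package.CreatePart(partUri, "text/plain");
            using (Stream stream = part.GetStream())
            using (StreamWriter writer = new StreamWriter(stream))
            {
                writer.Write(text);
            }
        }
    }

    // Opens the package read-only and returns the content of the given part as text.
    public static string ReadTextPart(string packagePath, string partName)
    {
        Uri partUri = PackUriHelper.CreatePartUri(new Uri(partName, UriKind.Relative));

        using (Package package = Package.Open(packagePath, FileMode.Open, FileAccess.Read))
        using (Stream stream = package.GetPart(partUri).GetStream(FileMode.Open, FileAccess.Read))
        using (StreamReader reader = new StreamReader(stream))
        {
            return reader.ReadToEnd();
        }
    }
}
```

Calling `PackageSketch.WriteTextPart("sample.pkg", "/data/notes.txt", "hello")` should leave you with a file that any zip tool can open, whatever extension you give it.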

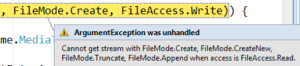

But there is (in my opinion, of course) a huge stupidity in the way that writing to streams is handled. Every stream first goes to an Isolated Storage folder, which resides on the system drive. Why is that a problem?

Imagine a scenario where your system drive is C: and you want to create a file on drive D: (which may as well be on the network). Everything is first created on drive C: and then copied to drive D:. That means that whatever amount of data you write, this class writes almost twice as much to disk: first the uncompressed data goes to drive C:, and afterwards it gets compressed onto drive D:.

That also means that you need to watch not only the amount of disk space at your final destination; your system drive also needs enough free space to hold the total uncompressed content.
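If you want to guard against this, a rough pre-flight check is possible. The sketch below assumes that isolated storage sits under the current user's local application data folder (which is usually on the system drive); the class and method names are hypothetical, and you have to supply the estimate of the uncompressed size yourself.

```csharp
using System;
using System.IO;

// Hypothetical helper class, for illustration only.
static class DiskSpaceSketch
{
    // Returns true if the drive that holds the user's local application data
    // (assumed here to be where isolated storage ends up) has at least
    // requiredBytes available to the current user.
    public static bool TempDriveHasRoom(long requiredBytes)
    {
        string localAppData = Environment.GetFolderPath(Environment.SpecialFolder.LocalApplicationData);
        DriveInfo drive = new DriveInfo(Path.GetPathRoot(localAppData));
        return drive.AvailableFreeSpace >= requiredBytes;
    }
}
```

This only tells you how things stand at the moment of the check; another process can still fill the drive while the package is being written.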

Even worse, if the program crashes while the package is being made, you will get an orphaned file in isolated storage. That may not be an issue at that moment, but after a while the system may complain about not having enough space on the system drive. Deleting orphaned files could also prove difficult, since it is very hard to tell which file belongs to which program (they have random names).

Weird defaults

There is also the issue that when you first create a part, its compression option is set to not compressed. That did surprise me, since one of the advantages of zip packaging is small size. Since every disk access slows things down, having the file compressed is an advantage.

Since every part can have a separate compression option, I tend to set it to Normal for most of them. Only if I know that the content is very random (encrypted data or sound) do I set it to no compression. Reading compressed data is a little bit slower, but I have yet to find a computer so slow that decompression becomes an issue.
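In code, the compression option is simply the third argument to `CreatePart`. Here is a small sketch of how I would wrap that; the class `CompressionSketch`, its methods, and the part names and content types are made up for illustration.

```csharp
using System;
using System.IO;
using System.IO.Packaging; // lives in WindowsBase.dll on .NET Framework

// Hypothetical helper class, for illustration only.
static class CompressionSketch
{
    // Adds a part with an explicit compression option and writes data into it.
    public static PackagePart AddPart(Package package, string partName, string contentType,
                                      byte[] data, CompressionOption compression)
    {
        Uri partUri = PackUriHelper.CreatePartUri(new Uri(partName, UriKind.Relative));

        // Without the third argument the part would be created as NotCompressed.
        PackagePart part = package.CreatePart(partUri, contentType, compression);
        using (Stream stream = part.GetStream())
        {
            stream.Write(data, 0, data.Length);
        }
        return part;
    }

    // Example: compress text, but store already-compressed audio as-is.
    public static void BuildSample(string packagePath, byte[] textBytes, byte[] audioBytes)
    {
        using (Package package = Package.Open(packagePath, FileMode.Create))
        {
            AddPart(package, "/text/readme.txt", "text/plain", textBytes, CompressionOption.Normal);
            AddPart(package, "/audio/clip.mp3", "audio/mpeg", audioBytes, CompressionOption.NotCompressed);
        }
    }
}
```

The `CompressionOption` enumeration also has `Maximum`, `Fast`, and `SuperFast` values if `Normal` does not fit your data.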

To use it or not

I do hope that the next version of the framework will optimize some things (e.g., just get rid of the temporary files). However, I will use it nevertheless, since it does make transferring related files a lot easier. Just be careful that there is enough space on the system drive and everything should be fine.

If you want to check it out, there is simple sample code available.